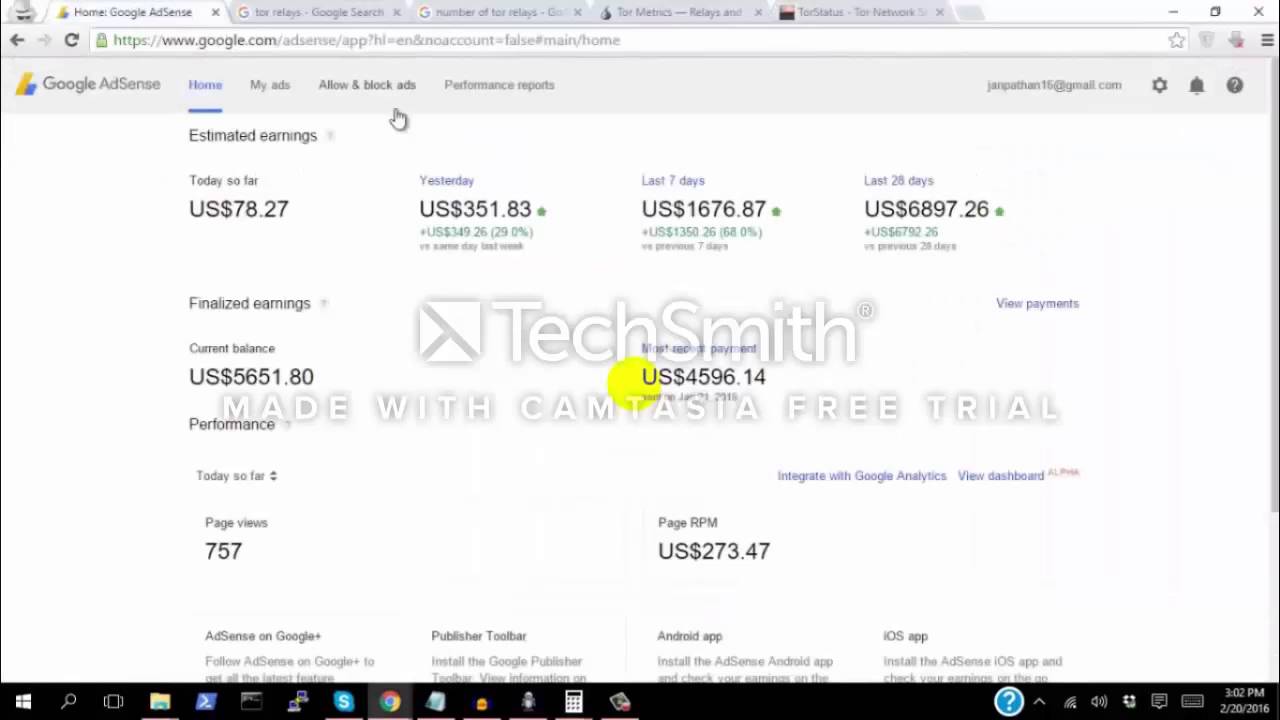

I've been using this example on some of my sites for a while and find it very effective at reducing spambot and clickbot activity. It not only keeps these bots from wasting server resources, it also appears to have significantly increased RPM and reduced clawbacks by preventing invalid AdSense clicks and impressions.

It still needs some work to eliminate the need to copy and paste the forms and CSS into each page. I'm hoping someone here has a little more layout experience than I have and can sanitize and simplify it for use as a global include. The forms and CSS should all be able to reside in the include file, provided valid HTML and inline CSS are used. I prefer a hardcoded $datFile path, but others may like getCWD().

I've had no issues on low to medium traffic sites, up to 3000 pageviews, but I have no idea whether this will scale well for higher traffic sites. All it's really doing is checking the visitor's IP against whatever is stored in $datFile, so it depends on how large you allow that file to get. I suppose you could rotate the file if it gets too large. I didn't include dynamic writing to .htaccess here because I've found it too unpredictable in the past. Viewing the formatted deny list should probably be done on a separate page too.
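For anyone who wants to see the idea in isolation, here is a minimal sketch of the IP check and the file rotation mentioned above. It is not the script from this post: the function names `isDenied()` and `rotateIfTooLarge()` are made up for illustration, and it assumes $datFile holds one plain IP address per line, which may not match the format you actually use.

```php
<?php
// Hypothetical helpers for illustration only.
// Assumption: $datFile is a plain text file with one IP address per line.

// Return true if $ip appears in the deny list stored in $datFile.
function isDenied(string $ip, string $datFile): bool
{
    if (!is_readable($datFile)) {
        return false; // no deny list yet, so nothing is blocked
    }
    // Read every line, dropping newlines and empty lines.
    $entries = file($datFile, FILE_IGNORE_NEW_LINES | FILE_SKIP_EMPTY_LINES);
    if ($entries === false) {
        return false;
    }
    // Exact string match against each trimmed entry.
    return in_array($ip, array_map('trim', $entries), true);
}

// Rename $datFile with a timestamp suffix once it grows past $maxBytes.
// Returns true only if a rotation actually happened.
function rotateIfTooLarge(string $datFile, int $maxBytes): bool
{
    clearstatcache(true, $datFile); // avoid a stale cached file size
    if (!is_file($datFile) || filesize($datFile) <= $maxBytes) {
        return false;
    }
    return rename($datFile, $datFile . '.' . date('Ymd-His'));
}
```

In a page you would call something like `isDenied($_SERVER['REMOTE_ADDR'], $datFile)` near the top and send a 403 when it returns true. Because the whole file is read on every request, lookup cost grows with the size of $datFile, which is why rotating it past some size limit keeps the check cheap.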
